Live sound spatialisation: I

Building an interface for multichannel sound diffusion

Sound diffusion across multiple speakers is an art form, especially where realtime control is concerned. In this post we showcase a live sound spatialisation interface created by Juan Duarte. “I” uses a CTAG board and Trill Sensors to create a flexible tool for controlling spatialisation during sound performances and installations.

Sound objects in space

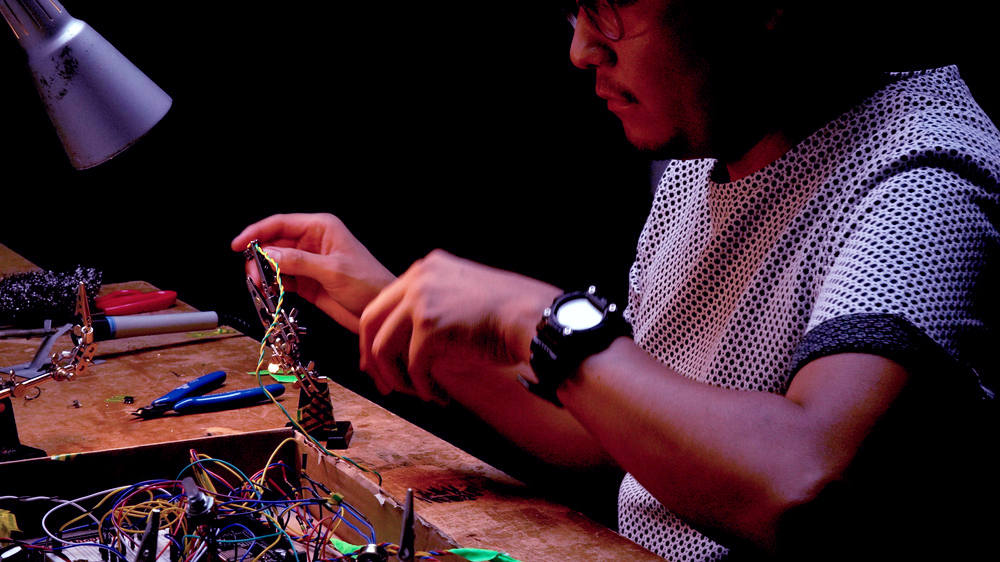

Since mid-2019 I have been working on a multichannel system for sound spatialisation, which started as a collaboration with Arnaud Riviere and Mario de Vega.

“I” diffuses sound using both manual and semi-automated modes. Sound can be spread manually across multiple pairs of speakers via the interface’s touch sensors, or transformed automatically according to wavetables and generative sequences. These wavetables are flexible and can be reshaped on the fly via the audio inputs, which treat an incoming audio signal like a control voltage for amplitude modulation of the diffusion.
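To make the idea of wavetable-driven diffusion more concrete, here is a minimal Python sketch. It is not the Pure Data patch used in the instrument: the raised-cosine table, the per-speaker phase offsets, and the `control` parameter (standing in for a rectified input level between 0 and 1) are all assumptions made for illustration.

```python
import math

NUM_SPEAKERS = 8  # the system described here has eight outputs


def make_wavetable(size=64):
    """One cycle of a raised cosine with values in [0, 1] (hypothetical shape)."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / size) for i in range(size)]


def read_table(table, phase):
    """Linearly interpolated lookup; phase wraps around in [0, 1)."""
    pos = (phase % 1.0) * len(table)
    i = int(pos) % len(table)
    frac = pos - int(pos)
    return table[i] * (1 - frac) + table[(i + 1) % len(table)] * frac


def diffusion_gains(table, phase, control=1.0):
    """Per-speaker gains, each speaker reading the table at its own offset.

    `control` (assumed to be an input level in [0, 1]) sets the modulation
    depth: 0 leaves every gain at 1.0, 1 applies the full wavetable.
    """
    gains = []
    for s in range(NUM_SPEAKERS):
        g = read_table(table, phase + s / NUM_SPEAKERS)
        gains.append(1.0 - control + control * g)
    return gains
```

Advancing `phase` over time moves the loud region around the speaker array, while a louder input signal would deepen the effect.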

An earlier prototype of I.

In addition, another set of amplitude modulations can be triggered as presets to modify the volume of all channels simultaneously. The system is broadly influenced by Ambisonics principles. However, instead of using existing spatialisation libraries, I devised a system based on my personal experience of using AM and FM synthesis methods in Pure Data.
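As a rough illustration of a preset that modulates every channel at once, the sketch below applies one sine-shaped gain to a whole multichannel frame. The preset names, rates, and depths are hypothetical and are not taken from the instrument.

```python
import math

# Hypothetical presets: modulation rate in Hz and depth in [0, 1].
PRESETS = {
    "slow_swell": {"rate_hz": 0.25, "depth": 0.8},
    "tremolo": {"rate_hz": 6.0, "depth": 0.5},
}


def preset_gain(preset, t):
    """Gain multiplier at time t (seconds), ranging from 1 - depth up to 1."""
    lfo = 0.5 + 0.5 * math.sin(2 * math.pi * preset["rate_hz"] * t)  # in [0, 1]
    return 1.0 - preset["depth"] * (1.0 - lfo)


def apply_preset(frame, preset, t):
    """Scale one frame (a list of per-channel samples) by the same gain."""
    g = preset_gain(preset, t)
    return [sample * g for sample in frame]
```

Because the same multiplier reaches all channels, the spatial balance set by the touch sensors would stay intact while the overall level pulses.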

Balanced XLR Inputs and Outputs

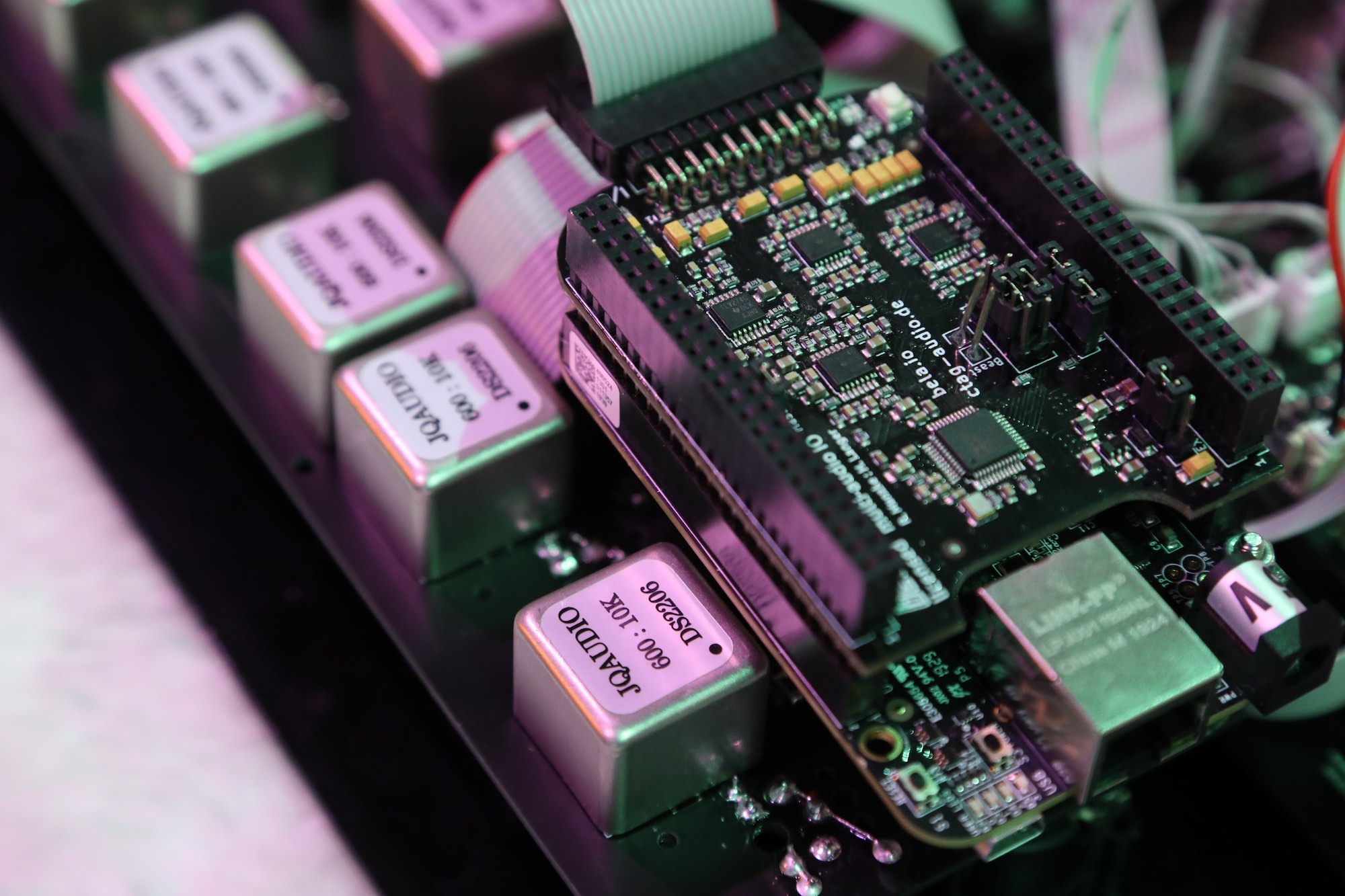

The system relies on eight outputs and four inputs which are provided by the CTAG Face Cape. The CTAG signals go into a circuit board which converts unbalanced to balanced signals, as balanced signals are required for using XLR outputs and inputs.

CTAG board and circuit for balanced signals.

With this setup it is possible to send sound directly to an active speaker without any mixer in between. The circuits I designed balance the output signals from the Bela using coil transformers. These passive components do not amplify or distort the initial signal, so they remove the need for DI Boxes on each of the outputs.

Touch Interface

The output position in the speaker array is controlled with a pair of Trill sensors. Thanks to their multitouch capability, these sensors offer several position coordinates. A single finger controls the panning between a pair of speakers, while two fingers on each Trill transform the arrangement across the whole system.
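The mapping from touch to speaker gains could look something like the following Python sketch. It assumes the touch position has already been normalised to the range 0 to 1, uses an equal-power pan law for the single-finger case, and models the two-finger gesture as a simple rotation of the gain pattern. These are illustrative choices with hypothetical function names, not a description of the actual patch.

```python
import math


def equal_power_pan(position):
    """Map a position in [0, 1] to two gains whose squares sum to 1."""
    position = min(max(position, 0.0), 1.0)
    angle = position * math.pi / 2
    return math.cos(angle), math.sin(angle)


def pair_gains(touch_position, pair_index, num_speakers=8):
    """Gains for the whole array when one finger pans within one speaker pair."""
    gains = [0.0] * num_speakers
    a = (2 * pair_index) % num_speakers
    b = (a + 1) % num_speakers
    gains[a], gains[b] = equal_power_pan(touch_position)
    return gains


def rotate(gains, steps):
    """Shift the gain pattern around the array (assumed two-finger gesture)."""
    steps %= len(gains)
    return gains[-steps:] + gains[:-steps]
```

For example, `pair_gains(0.5, 0)` places the sound midway between the first two speakers, and `rotate(..., 2)` moves that same image to the next pair.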

Touch interface for spatialisation control.

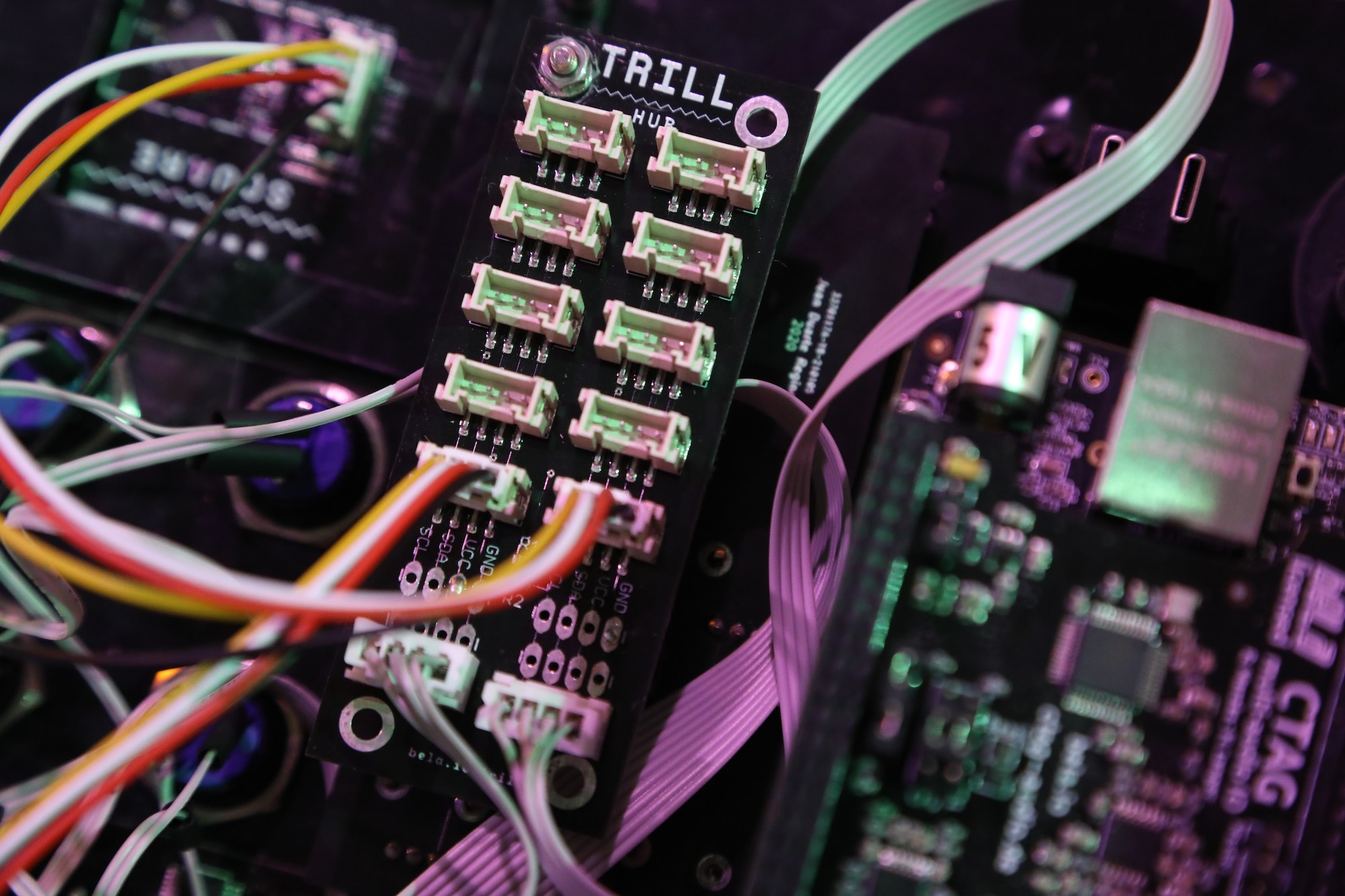

The expressivity of the Trill sensors is outstanding: they are highly sensitive and adapt well to this instrument. A SparkFun Touch Potentiometer works as a master volume fader. All touch sensors are interconnected and handled with a microcontroller via the Trill Hub.

Trill Hub bringing together the I2C signals from the various touch sensors.

Menu Display

The instrument features a graphical user interface on an OLED display. It shows the current mode assigned to the inputs, the status of the touch sensors, and the volume levels.

The OLED display for parameter feedback.

Currently, this graphical user interface operates with an external microcontroller.

The OLED display also gives feedback on the status of the touch sensors.

Expansion Ports

The design described above is meant as a spatialisation tool. Beyond that, I am working towards including environmental sensors and transmission/reception using Software Defined Radio. For this future expansion I have included a port for connecting external sensors or devices to the system.

Special thanks to Sonic Protest Paris, STEIM Amsterdam, Hacker in residence programme Bitwäscherie Zürich by Marc Dusseiller, Arts Promotion Centre Finland, Aalto University Fablab, Bettina Katja Lange and Macario Ortega.

About Juan Duarte Regino

Juan Duarte Regino is a Mexican-born artist-researcher working with environmental sound, exploring the entanglements between nature and technology. He creates artifacts and instruments that resonate with planetary energies and ancient cosmologies. His research explores augmented listening of environments, combining AI and remote sensing techniques. His work includes lectures, workshops, and performances in Europe, Asia, and Mexico. He has been a lecturer at Aalto University, a visiting researcher at IAMAS in Japan, and a grantee of the National Endowment of Arts in Mexico and of Arts Promotion Centre Finland.

For more of his work visit: www.juanduarteregino.com/